cm3015 Topic 01: Introduction

Main Info

Title: Introduction to ML and NN

Teachers: Jamie Ward

Semester Taken: October 2021

Parent Module: cm3015 Machine Learning and Neural Networks

Description

This topic introduces the basic types of machine learning and looks at some of the various problems that machine learning approaches can help us to solve. It introduces the differences between supervised and unsupervised learning, reinforcement learning, and neural networks.

Assigned Reading

Supplementary Reading

Canonical Definition

A computer program is said to learn from experience E, with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E. (Mitchell, T. M., Machine Learning, 1997).
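The three parts of the definition can be made concrete with a toy sketch. Everything here is an assumption chosen for illustration, not something from the lecture: the task T is labelling points in [0, 1] against a hidden cut-off, the experience E is the number of labelled examples, the performance measure P is accuracy on a fixed test set, and the learner is a 1-nearest-neighbour rule.

```python
def true_label(x):
    """Hidden rule the learner must discover (a made-up cut-off for this toy task)."""
    return 1 if x >= 0.37 else 0

def make_examples(n):
    """Experience E: n labelled points spread evenly over [0, 1]."""
    xs = [(i + 0.5) / n for i in range(n)]
    return [(x, true_label(x)) for x in xs]

def predict(train, x):
    """Task T: label x by copying the label of the closest training point."""
    nearest = min(train, key=lambda pair: abs(pair[0] - x))
    return nearest[1]

def accuracy(train, test):
    """Performance measure P: fraction of test points labelled correctly."""
    correct = sum(predict(train, x) == y for x, y in test)
    return correct / len(test)

test_set = make_examples(1000)
results = {n: accuracy(make_examples(n), test_set) for n in (2, 10, 100)}
for n, acc in results.items():
    print(f"E = {n:3d} examples -> P = {acc:.3f}")
```

On this toy task the accuracy rises as the number of examples grows, which is the sense of "learning" the definition describes.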

Lecture Summaries

1.101: The lecture defines ML as a branch of AI that allows machines to learn by example. It then presents example applications: e-passport gates using facial recognition (a type of object detection), body tracking, handwritten digit recognition, speech recognition, and online translation. The quality and volume of the data are significant factors in the quality of the resulting system. The lecture reviews the issues around driverless cars. It also reviews recommender systems, where a common problem is missing data that the algorithm tries to complete; generative models, e.g. generating faces; and sensor-based activity recognition, where sensors take diverse inputs and infer various states (e.g. whether you are standing or sitting).

1.102: Types of ML. “We use ML because we want to learn from data rather than hard code a solution… in most of these cases we don’t know how to model the problem.” Take facial recognition as an example: if someone asked you to write a function that returned true if your face was present in an image and false otherwise, the implementation of that function would be very difficult to specify. ML algorithms can learn how to implement that function.

There are two main types of ML problem:

supervised learning problems, where every sample x is associated with a label y and we try to learn mappings from x to y.

unsupervised learning problems, where we simply observe a dataset that consists of examples of x, with no labels. Perhaps we want to cluster the set of inputs into two subgroups.
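The contrast between the two problem types can be sketched on one small dataset. This is a minimal illustration under stated assumptions: the data values and the label names "a" and "b" are made up, the supervised learner is a nearest-centroid rule, and the unsupervised one is a two-means clustering loop. Neither is claimed to be the method used in the lecture.

```python
from statistics import mean

# Toy 1-D measurements; the values and label names are made up for illustration.
xs = [1.0, 1.2, 0.8, 1.1, 4.9, 5.2, 5.0, 4.8]
ys = ["a", "a", "a", "a", "b", "b", "b", "b"]

def fit_supervised(xs, ys):
    """Supervised: learn one centroid per label from (x, y) pairs."""
    return {label: mean(x for x, y in zip(xs, ys) if y == label)
            for label in set(ys)}

def predict(centroids, x):
    """Map a new x to the label whose centroid is closest."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))

def cluster_unsupervised(xs, steps=10):
    """Unsupervised: split xs into two groups without using any labels."""
    c0, c1 = min(xs), max(xs)  # simple initial guesses for the two centres
    groups = []
    for _ in range(steps):
        # Assign each point to its nearer centre, then recompute the centres.
        groups = [0 if abs(x - c0) <= abs(x - c1) else 1 for x in xs]
        c0 = mean(x for x, g in zip(xs, groups) if g == 0)
        c1 = mean(x for x, g in zip(xs, groups) if g == 1)
    return groups

centroids = fit_supervised(xs, ys)
print(predict(centroids, 1.3))   # a label name for an unseen x
print(cluster_unsupervised(xs))  # a group index per sample, with no label names
```

The supervised model returns one of the label names it was given, whereas the clustering can only return anonymous group indices, because it never saw any labels.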

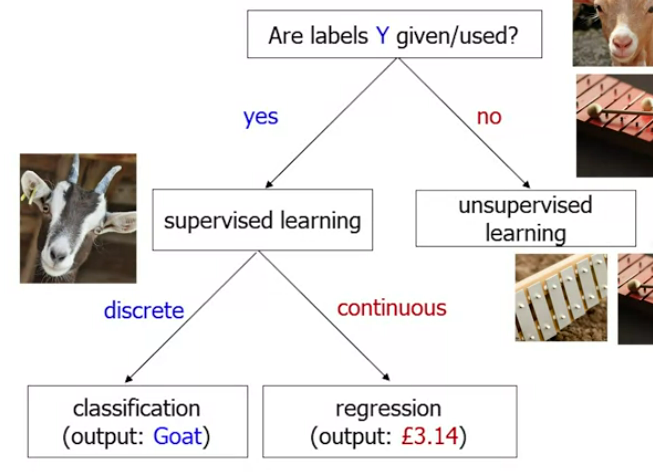

The lecture gives a taxonomy of ML problems based on whether the labels are given or not and, if so, whether they are discrete (classification) or continuous (regression).
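The discrete versus continuous split can be illustrated with the same inputs paired with two kinds of label. The data below (hours of study, exam scores, pass/fail flags) are invented for the example, and the two fitting functions are simple stand-ins: ordinary least squares for the continuous case and a midpoint threshold for the discrete case.

```python
# Made-up data: hours of study for six students.
hours = [1, 2, 3, 4, 5, 6]
scores = [30, 40, 50, 60, 70, 80]   # continuous labels -> regression
passed = [0, 0, 0, 1, 1, 1]         # discrete labels   -> classification

def fit_line(xs, ys):
    """Regression sketch: ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
             / sum((x - mx) ** 2 for x in xs))
    return slope, my - slope * mx

def fit_threshold(xs, ys):
    """Classification sketch: cut halfway between the two class means."""
    mean0 = sum(x for x, y in zip(xs, ys) if y == 0) / ys.count(0)
    mean1 = sum(x for x, y in zip(xs, ys) if y == 1) / ys.count(1)
    return (mean0 + mean1) / 2

slope, intercept = fit_line(hours, scores)
threshold = fit_threshold(hours, passed)

print(slope * 4.5 + intercept)   # predicted score: a real number
print(int(4.5 > threshold))      # predicted class: 0 or 1
```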

A third type of ML problem is a reinforcement learning problem, where we try to predict a sequence of actions that leads to a specific reward. For example, in game playing you perform a sequence of actions that results in a win or a loss. That result feeds back into the process to change the actions and discover new ways of playing, so the system learns to play better over time.
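The feedback loop described above can be sketched with tabular Q-learning, one common reinforcement learning method and not necessarily the one the lecture has in mind. The environment is a hypothetical five-cell corridor where reaching the last cell counts as a win; the constants `GAMMA` and `ALPHA` and the purely random exploration are assumptions chosen to keep the example short.

```python
import random

N_STATES = 5                 # corridor cells 0..4; reaching cell 4 is a "win"
ACTIONS = ["left", "right"]
GAMMA, ALPHA = 0.9, 0.5      # discount factor and learning rate (assumed values)

def step(state, action):
    """Move one cell; the reward is 1.0 only when the goal cell is reached."""
    nxt = max(0, state - 1) if action == "left" else state + 1
    reward = 1.0 if nxt == N_STATES - 1 else 0.0
    return nxt, reward

def train(episodes=500, seed=0):
    """Play many episodes and update the value estimate after every move."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            action = rng.choice(ACTIONS)  # explore at random while learning
            nxt, reward = step(state, action)
            best_next = max(q[(nxt, a)] for a in ACTIONS)
            # Feed the outcome back into the estimate for (state, action).
            q[(state, action)] += ALPHA * (reward + GAMMA * best_next
                                           - q[(state, action)])
            state = nxt
    return q

q = train()
# Greedy policy: in each non-goal cell, pick the action with the higher value.
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)
```

The reward only arrives at the end of the corridor, yet the learned values propagate backwards so that the greedy policy moves towards the goal from every cell.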

1.103: Presents an ML pipeline with a ‘black box’, where the training stage learns the mapping from inputs to labels. That mapping can then be applied to unseen data to assign a label to a new input. In supervised learning there is often an unsupervised pre-processing stage, which might learn which features are needed for the labelling task.
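A pipeline of this shape can be sketched as follows. All specifics are assumptions for illustration: the sensor features (height in metres, heart rate in bpm) and their values are invented, standardisation stands in for the unsupervised pre-processing stage (the lecture's stage might instead learn features), and a nearest-centroid rule plays the role of the 'black box'.

```python
from statistics import mean, pstdev

def fit_scaler(rows):
    """Unsupervised step: learn per-feature mean and spread from inputs only."""
    cols = list(zip(*rows))
    return [mean(c) for c in cols], [pstdev(c) or 1.0 for c in cols]

def scale(rows, scaler):
    """Apply the learned scaling to any rows, seen or unseen."""
    means, stds = scaler
    return [[(v - m) / s for v, m, s in zip(row, means, stds)] for row in rows]

def fit_centroids(rows, labels):
    """Supervised step: learn one centroid per label."""
    centroids = {}
    for label in set(labels):
        members = [r for r, y in zip(rows, labels) if y == label]
        centroids[label] = [mean(c) for c in zip(*members)]
    return centroids

def predict(centroids, row):
    """Assign the label of the closest centroid (squared Euclidean distance)."""
    def dist2(centre):
        return sum((a - b) ** 2 for a, b in zip(row, centre))
    return min(centroids, key=lambda label: dist2(centroids[label]))

# Made-up training data: [sensor height in m, heart rate in bpm].
train_x = [[0.50, 62], [0.55, 65], [0.48, 60],
           [0.95, 70], [1.00, 72], [0.98, 68]]
train_y = ["sitting"] * 3 + ["standing"] * 3

# Training stage: fit the pre-processing, then learn the mapping.
scaler = fit_scaler(train_x)
model = fit_centroids(scale(train_x, scaler), train_y)

# Prediction stage: reuse both fitted stages on unseen inputs.
unseen = [[0.52, 66], [0.97, 64]]
predictions = [predict(model, row) for row in scale(unseen, scaler)]
print(predictions)
```

The scaler is fitted on the training inputs alone and then reused unchanged on the unseen rows. On this toy data, skipping the scaling step flips the second prediction, because the heart-rate column's larger numeric range dominates the distance.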

Lab Summaries

The first lab introduces Jupyter notebooks, and links to a LaTeX cheatsheet. It also introduces Gist as a way to share snippets.

The second lab recaps basic Python - variables, loops, conditionals, containers (lists and dictionaries), and functions.

The third lab recaps NumPy with some NumPy usage examples.

The final lab recaps matplotlib with some matplotlib usage examples.