The Structure of Agents (Russell Norvig)

Source

Russell Norvig Chapter 02: Intelligent Agents

pp. 65 - 78

Outline

The job of AI is to design an agent program that implements the agent function - the mapping from percepts to actions. We assume this program will run on some sort of computing device with physical sensors and actuators - we call this the agent architecture:

agent = architecture + program

The program we choose has to fit the architecture, the architecture might be a PC, robot, car etc. In general the architecture makes the percepts from the sensors available to the program, runs the program, and feeds the program’s action choices to the actuators as they are generated.

The book focuses on designing programs, rather than architectures. The section describes four basic kinds of agent programs:

Simple reflex agents

Model-based reflex agents

Goal-based agents

Utility-based agents

The book has several useful diagrams for these models.

The model for agent programs

The basic model for agent programs in the book is a synchronous one. The current percept is taken as input from the sensors, and an action is returned to the actuators. This contrasts with an asynchronous model where the agent runs in parallel with the environment as coroutines, with each coroutine having input and output ports and a loop to poll for percepts/actions.

A false start

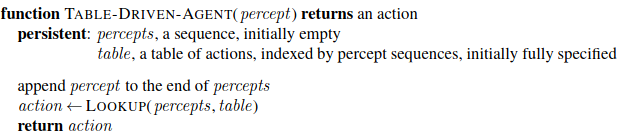

Let’s start with an approach that implements the agent function by means of a lookup table:

This does do what we want, if the table is correctly filled in, but it is doomed to failure.

Let P be the set of possible percepts, and T the lifetime of the agent. Then the lookup table needs to contain \(\sum^{T}_{t=1} |P|^t\) entries.

Even the lookup table for chess has \(10^{150}\) entries. The number of atoms in the known universe is less than \(10^{80}\).

The key challenge for AI is to find out how to write programs that, to the extent possible, produce rational behavior from a smallish program rather than from a vast table. (p. 67)

Analogy with square roots, the huge tables of square roots are now replaced with a five line program for Newton’s method. Can AI do the same for general intelligent behaviour?

Simple Reflex Agents

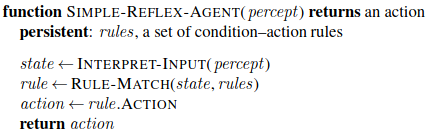

The simplest kind of agent is the simple reflex agent, which select actions based on the current percept, ignoring the rest of the percept history.

Here’s a general model:

The description of rules and matching is purely conceptual, it could be as simple as a collection of logic gates, or a ‘neural’ circuit.

These types of agent are admirably simple but of limited intelligence, they will work only if the correct decision can be made on the basis of just the current percept - that is, if the environment is fully observable.

Even a limited amount of unobservability can cause problems. Consider the vacuum without the ability to sense its location. What does it do if the current square is clean?

Randomized behaviour might help escape infinite loops, but not likely to lead to rational actions in single-agent environments.

Model-based Reflex Agents

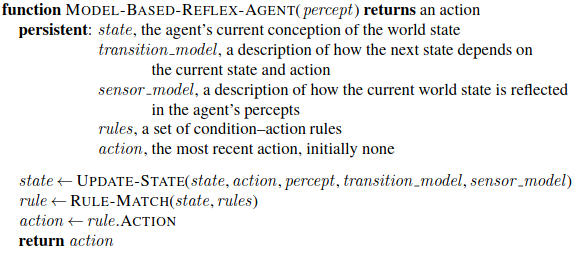

The most effective way to handle partial observability is for the agent to keep track of the part of the world that it can’t see now.

That is, the agent must maintain some kind of internal state that depends on the percept history.

Updating the internal state action as time goes by requires two kinds of knowledge to be encoded in the agent program up front:

Some kind of knowledge about how the world changes over time: The effects of the agent’s actions and how the world changes independently of them. This is called a transition model of the world.

Some knowledge of how the state of the world is reflected in the agent’s percepts. For example when the camera gets wet droplet-shaped objects will appear. This kind of knowledge is called a sensor model.

Together, the transition model and the sensor model allow the agent to keep track of the state of the world. An agent using such models is called a model-based agent. Uncertainty about the state of the world may be unavoidable, but the agent has to make a best guess based on its percepts and make a decision. Here’s a general function for a model-based agent:

Goal-based Agents

Knowing something about the state of the world isn’t always enough to know what to do. For example, deciding whether to turn left or right at a junction while driving depends on the agent’s goal information that describes situations that are desirable.

Sometimes goal satisfaction is straightforward, if an action immediately brings about the goal. Otherwise it may depend on a long sequence of actions. Search and planning are the subfields of AI devoted to finding action sequences that achieve an agent’s goals.

We have a fundamental break here from reflex agents, which map directly from percepts to actions. A reflex agent breaks when it sees red lights, it does not know why. A goal-based agent brakes when it sees red lights because that’s the only action it predicts will achieve its goal of not crashing.

This can seem inefficient, but it’s also flexible. Goals can be substituted.

Utility-based Agents

Goals are not by themselves enough to generate complex, high quality behaviour. They just provide crude, binary distinctions between “happy” and “unhappy” outcomes. A car does not just need to reach its destination, it might need to so quickly, or safely, or cheaply, and trade these off.

A more general performance measure allows a comparison of different world states according to how happy they would make the agent. Rather than “happy” we use the term utility.

An agent’s utility function is an internalization of the external performance measure. If the agent’s internal utility function and the designer’s external performance measure align, and the agent chooses to maximize its utility, it will be rational according to the external measure.

In two kinds of cases goals are inadequate but a utility-based agent can still make rational decisions:

When there are conflicting goals, only some of which can be achieved (speed vs safety), the utility function can specify the tradeoff.

When there are several goals that the agent can aim for, but none can be achieved with certainty, the utility function provides a way in which the likelihood of success can be weighed against the goals’ importance.

Given partial observability and nondeterminism, an agent is actually choosing actions that maximize the expected utility of their outcomes, rather than their actual utility.

This is not a simple model to build, agents have to model and keep track of their environment, tasks that have produced extensive research on perception, reprsentation, reasoning, and learning. Choosing utility maximizing actions is also a difficult algorithmic challenge.

Learning Agents

How can we build such complex agents? From the original Turing paper, the goal has been to build learning machines and then teach them, rather than programming them by hand.

A learning agent can be divided into four conceptual components… The most important distinction is between the learning element, which is responsible for making improvements, and the performance element, which is responsible for selecting external actions. (p. 74)

The performance element is what has been describe as the agent up to this point. The learning element uses feedback from the critic on how the agent is doing and determines how the performance element should be modified to do better in the future. The critic tells the learning element how well the agent is doing with respect to a fixed performance standard. The critic is needed as the percepts themselves don’t indicate the agent’s success. The performance standard must be fixed and outside the agent altogether, so that the agent doesn’t modify it to fit its behaviour. It distinguishes part of the incoming percept as a reward (or penalty) for the agent to learn how its behaviour should change.

The design of the learning element depends very much on the design of the performance element. When trying to design an agent that learns a certain capability, the first question is not “How am I going to get it to learn this?” but “What kind of performance element will my agent use to do this once it has learned how?” Given a design for the performance element, learning mechanisms can be constructed to improve every part of the agent. (p. 75)

The final component of the learning agent is the problem generator which suggests actions that will lead to new and informative experiences.

The learning element can make changes to any of the “knowledge” components of the agents. They might learn directly from the percept sequence. For example a car braking in wet conditions might learn how much deceleration is achieved. The problem generator will identify gaps in the knowledge base and suggest experiments, like trying different degrees of braking pressure in different conditions.

Representations of the Environment

The section concludes with a categorization of different ways in which components of the agent may represent the environment the agent inhabits.

In increasing order of expressiveness the categories are:

atomic representation: Each state of the world is indivisible, with no internal structure. A single atom of knowledge, or “black box”, whose only distinction is being identical or not with another black box. The standard algorithms underlying search and game-playing, hidden Markov models, and Markov decision processes work with atomic representations.

factored representation: Each state is split into a fixed set of variables or attributes, each of which can have a value. Values can be Boolean, real numbered, or symbolic. States may share some attributes but not others. Many important areas of AI are based on factored representations, like constraint satisfaction, propositional logic, planning, Bayesian networks, and some ML algorithms.

structured representation: expresses the relations between objects in the world, not just variables with values. For example a car sees a lorry reversing towards a cow. It is unlikely to have a predefined attibute-value pair to express this. Structured representations underlie relational databases, first-order logic, first-order probability, and much of natural language understanding. “much of what humans express in natural language concerns objects and their relationships”.

The more expressive representations can be much more concise, but reasoning and learning become more complex as the expressive power increases. So agents in the real world may need to operate at all points along this expressiveness axis simultaneously.

Finally the chapter presents another axis of representations, the mapping of concepts to locations in physical memory. This can either be localist, when there is a one-to-one mapping between concepts and memory locations, or distributed if the representation of a concept is spread over many memory locations and each location is employed as part of the representation of multiple different concepts.

Distributed representations are more robust against noise and info loss, each point is in multidimensional space, so if you miss one, you’ll end up in a similar location.