Search Algorithms (Russell Norvig)

As presented in Russell Norvig Chapter 03: Solving Problems by Searching

Related to Search Problems

Definitions

A search algorithm takes a search problem as input and returns as solution, or an indication of failure. In this chapter we consider algorithms that impose a search tree over the state space graph, forming various paths from the initial state, trying to find a path that reaches a goal state. Each node in the search tree corresponds to a state in the state space and the edges in the search tree correspond to actions. The root of the tree corresponds to the initial state of the problem.

Note that the search tree and the state space are different. The state space is the (possibly infinite) set of all states in the world, and the actions that transition from one state to another. The search tree describes paths between these states, reaching towards the goal. The search tree may have multiple paths to (and so nodes for) any given state.

The search process involves expanding nodes in the search tree, starting with the root (the initial state). To expand a node, we consider the available Actions for that state, using the Result function to see where those actions lead to, and generate a new child or successor node for each of the resulting states.

The set of unexpanded nodes in the search tree is its frontier. We have reached all the nodes generated, even those not yet expanded.

The essence of search is how we choose which of the child nodes to consider next - we follow up one option and put the others aside for later.

Best-first search

Best-first search is a very general approach to the question of how we decide which node to consider next.

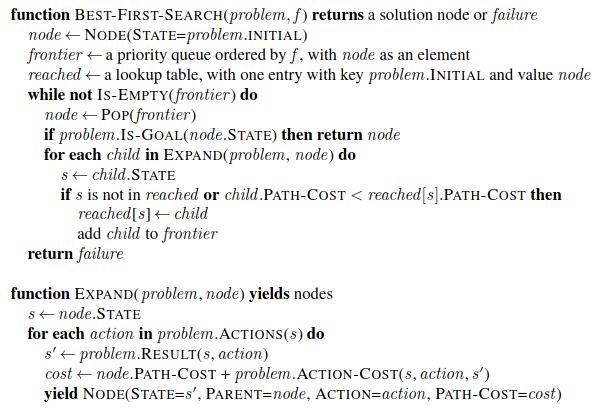

In best-first search we choose a node n with minimum value of some evaluation function \(f(n)\). Here is the pseudocode:

We choose a node on the frontier with minimum \(f(n)\), return it if its state is a goal state, otherwise Expand it to generate child nodes. By employing different \(f(n)\) functions, we get different search algorithms.

Search data structures

A node in the tree has the following components:

node.

STATE- the state to which the node correspondsnode.

PARENT- the node in the tree that generated this nodenode.

ACTION- the action that was applied to the parent’s state to generate this nodenode.

PATH-COST- the total cost of the path from the initial state to this node. Often referred to as \(g(node)\) in math models.

The frontier is often stored in a queue. It has the following operations:

Is-Empty(frontier)returns true if there are no nodes in the frontierPop(frontier)removes the top node and returns itTop(frontier)returns the top node without removalAdd(node, frontier)inserts the node into its proper place

Three kinds of queue are used in search algorithms:

A priority queue pops the node with the minimum cost according to f - used in best-first search

A FIFO queue pops the node that was added first - used in breadth-first search

A LIFO queue (or stack) pops the last node added - used in depth-first search

Reached states can be stored in a hash table, the keys are states, and the value is the node for that state.

Redundant Paths

In many cases search trees can visit the same state multiple times, in which case they are repeated states. The repeated state might be generated by a cycle. Think of a route-finding algorithm that doubles back on itself - the complete search tree is infinite. A cycle is a special case of redundant path. Often there will be a very high number of redundant paths, and if we can eliminate them we can complete the search much faster. This is captured in the saying algorithms that cannot remember the past are doomed to repeat it. There are three approaches to redundant paths:

Remember all previously reached states - Like the best-first search algorithm above. We keep only the best path to each state. We can use this when there are many redundant paths, and when the table of reached states will fit in memory.

Don’t worry about it - Some problem formulations don’t require us to worry about the past. EG in an assembly line where you add part A to part B but it isn’t possible to do the other way round. We call a search algorithm a tree-like search if it doesn’t check for redundant paths (otherwise it is a graph search).

Just track cycles - We might just look at cycles by following parent chains, rather than look for all redundant paths by keeping a separate table. This saves memory. We might just look a few generations back to stick with constant time and eliminate only short cycles, or go all the way back to the root and eliminate all cycles.

Measuring Performance

We can evaluate a search algorithm’s performance in four ways:

Completeness - Is the algorithm guaranteed to find a solution when there is one, and to correctly report failure when there is not?

Cost optimality - Does it find a solution with the lowest path cost of all solutions?

Time complexity

Space complexity

Completeness is the most interesting of these. In finite state spaces, we can keep track of paths, eliminate cycles, and be sure that we can reach every reachable state from the start state. But what about infinite state spaces? Eg on an infinite grid, we might move infinitely in a straight line, never returning a revisited state, but also never reaching wide expanses of the space.

To be complete, a search algorithm must be systematic in the way that it explores an infinite space, making sure that it will eventually reach any state connected to the start state. Eg, it might spiral from the start state in the grid example. In an infinite space with no solution, the sound algorithm would keep searching forever. It can’t terminate because the next state could be its goal.