Breadth-first Search (Russell Norvig)

Definition

A Search Algorithm that adopts an Uninformed Search Strategy:

When all actions have the same cost, an appropriate strategy is breadth-first search, in which the root node is expanded first, then all successors of the root node are expanded next, then their successors, and so on. This is a systematic search strategy that is therefore complete even on infinite state spaces. (p. 94)

Implementation

We could implement breadth-first search as a call to Best-first-search where the evaluation function f(n) is the depth of the node - that is the number of actions it takes to reach the node.

There are a couple of efficiency gains possible however:

Implement a FIFO queue rather than a priority queue.

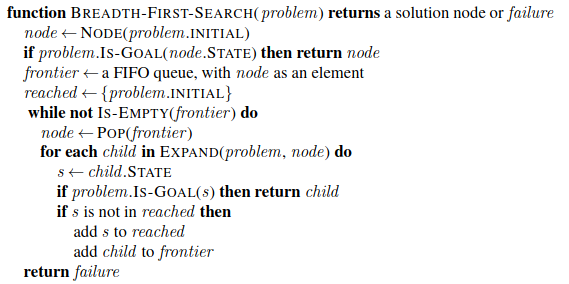

Implement

reachedas a simple set rather than a mapping of states to nodes, as once a state has been reached there is never a more efficient path to it.

This means we can implement an early goal test, checking whether a node is a solution as soon as it is generated rather than the late goal test that best-first search uses, waiting until a node is popped off the queue.

Pseudocode

Properties and Performance

Breadth-first search always finds a solution with the minimal number of actions. When it is generating nodes at depth d it has already generated all the nodes at depth \(d - 1\), so if one of those were a solution it would have been found.

This means if all actions have the same cost, BFS is cost-optimal, but it won’t be if that is not the case. BFS is, however, complete in both cases.

Regarding time and space performance, they consider searching a uniform tree where every state has b successors. The root expands to b, then each of those to b, giving \(b^2\), and so on. If a solution has a depth of \(d\) the number of nodes generated is \(O(b^d)\).

All the nodes remain in memory, so both time and space complexity are \(O(b^d)\). This is exponential growth and so scary: a branching factor of 10, with a solution depth of 10 and a memory requirement of 1 Kbyte/node would require 10 terabytes of memory.

The memory requirements are a bigger problem for breadth-first search than the execution time.

But time is still a limiting factor, with a depth of 14, the search might take 3.5 years processing 1 million nodes/second. Hence the warning about uninformed searches and exponential complexity problems.