Jurafsky Martin Chapter 02: Regular Expressions, Text Normalization, Edit Distance

Metadata

Title: Regular Expressions, Text Normalization, Edit Distance

Number: 2

Assigned reading in cm3060 Topic 02: Basic Processing

Coverage

The chapter introduces pattern-based natural language processing, introducing the topic with Eliza, the early chatbot from (Weizenbaum 1966). The main topics covered are regular expressions, text normalization, and edit distance.

Only the section on regular expressions is assigned as module reading, the other topics are covered by reading assignments from other books.

Regular Expressions

Formally, a regular expression is an algebraic notation for characterizing a set of strings.

The chapter introduces regexes quite informally through use in search, rather than as anything related to Finite Automata or regular languages.

It sets out the basics of regex:

| Pattern | Match |

|---|---|

| /[abc]/ | ‘a’, ‘b’, or ‘c’ |

| /[A-Z]/ | an upper case letter |

| /[a-z]/ | a lower case letter |

| /[0-9]/ | any digit |

| /[^A-Z]/ | not an upper case letter |

| /[e^]/ | either ‘e’ or ‘^’ |

| /wood?/ | wood or woo |

| /colou?r/ | color or colour |

| /[ab]*/ | zero or more ‘a’s or ‘b’s (greedy) |

| /[ab]*?/ | zero or more ‘a’s or ‘b’s (non-greedy) |

| /[ab]+/ | one or more ‘a’s or ‘b’s (greedy) |

| /[ab]+?/ | one or more ‘a’s or ‘b’s (non-greedy) |

| /beg.n/ | any character between ‘beg’ and ‘n’ |

| ^ | start of line |

| \$ | end of line |

| \b | word boundary |

| \B | non-word boundary |

| /\b99\b/ | 99 as a freestanding word (but not 299) |

| ❘ | disjunction operator |

| /cat❘dog/ | cat or dog |

| /gupp(y❘ies)/ | guppy or guppies |

| \d | any digit |

| \D | any non-digit |

| \w | any alphanumeric/underscore |

| \W | a non-alphanumeric |

| \s | whitespace |

| § | non-whitespace |

| {n} | exactly n occurences of the previous char or expression |

| {n,m} | between n and m occurrences |

| {n,} | at least n occurences |

| {,m} | at most m occurences |

| \ | escape special character (eg \*) for ‘*’ |

| /(?= pattern)/ | look ahead to check a match but do not advance cursor |

| /(?! pattern)/ | negative look ahead - used to rule out a special case in a complex pattern |

| /^(?!Volcano)[A-Za-z]+/ | match, at the beginning of a line, any single word that doesn’t start with “Volcano” |

And outlines the following precedence hierarchy:

- Parenthesis: ()

- Counters: * + ? {}

- Sequences and anchors: the ^my end$

- Disjunction: |

Substitutions and capture groups

The substitution operator: s/regexp1/pattern/ is used in Python and unix commands like sed to replace a regex match with another string. For example s/colour/color/

This becomes particularly powerful when we exploit capture groups. When we surround an expression with parentheses the resulting match is stored in a register, and can be referred to using the number operator, for example \1, to refer to the first capture group.

So for example, this regex will wrap all integers in angle brackets: s/([0-9]+)/<\1>/

We can use it in matches too, so /the (.*)er they (.*), the \1er we \2/ will match the faster they ran, the faster we ran but not the faster they ran, the faster we ate.

To use parentheses to define an expression without creating a capture group we use the ?: operator, like this (?:some| a few)

Words and Corpora

The next sections of the chapter repeat material that is covered in other reading, there are some helpful definitions though:

An utterance is the spoken correlate of a sentence.

The utterance “I do uh main- mainly business data processing” has two kinds of disfluencies. The broken-off word is a fragment, uh and um are called fillers or filled pauses.

A lemma is a set of lexical forms that share the same stem, the same major part-of-speech, and the same word sense.

The word form is the full inflected or derived form of the word.

For morphologically complex languages we often need to lemmatize, but for English word forms are usually fine for many tasks.

Types are the number of distinct words in a corpus, the size of the vocabulary.

Tokens are the total number of running words.

Dictionaries count lemmas rather than types or tokens, under entries or boldface forms.

The OED had 615,000 entries in 1989. Contrast that with 31,000 types in the works of Shakespare, 38,000 in the Brown corpus and 13 million in the Google N-gram corpus (counting only those with > 40 occurrences).

Herdan’s law states that the vocabulary size for a text goes up significantly faster than the root of its length in words. More precisely:

\(|V| = kN^\beta\)

where k and \(\beta\) are both constants, and \(\beta\) ranges from .67 to .75 in large corpora (between 0 and 1 more generally).

The chapter briefly introduces corpora, pointing out that corpus developers should help users by preparing a data statement that describes properties like motivation (and funding); situation of when the text was created; language variety; speaker demographics; collection process; annotation process; and distribution issues (eg IP).

Text Normalization

The chapter includes a potted version of Church’s Unix for Poets. As the original article is assigned as separate reading elsewhere it’s not summarised here.

Word Tokenization

We often want to break off punctuation as a separate token - commas are useful for parsers, periods etc mark sentence boundaries typically. But we’ll want to keep internal punctuation and other special characters like m.p.h. 1,000 or $39.95.

There are also commonly clitic contractions marked by apostrophes (eg what’re) which can be expanded to two tokens ‘what’ and ‘are’.

A clitic is a part of a word that can’t stand on its own, and can only occur when it is attached to another word.

Tokenization is also intimitely tied to named entity recognition, we may wish to tokenize multiword expressions as one token.

A common tokenization standard is the Penn Treebank tokenization standard. It separates clitics into distinct tokens, keeps hyphenated words together, and separates all punctuation. Tokenization typically needs to be fast, it’s usually the first step in all processing pipelines, so it tends to use deterministic algorithms based on regular expressions that are compiled to a fast FSM. The book shows an example of a pattern and its use in NLTK:

text = 'That U.S.A. poster-print costs $12.40...'

pattern = r'''(?x) # set flag to allow verbose regexps

([A-Z]\.)+ # abbreviations, e.g. U.S.A.

| \w+(-\w+)* # words with optional internal hyphens

| \$?\d+(\.\d+)?%? # currency and percentages, e.g. $12.40, 82%

| \.\.\. # ellipsis

| [][.,;"'?():-_`] # these are separate tokens; includes ], [

'''

nltk.regexp_tokenize(text, pattern)

# ['That', 'U.S.A.', 'poster-print', 'costs', '$12.40', '...']

The chapter discusses issues with languages such as Chinese, where character based tokenization is needed, rather than word based. It also discusses byte-pair encoding for tokenization, where you let the data tell you what the tokens should be.

This is a means of tackling the issue of unknown words. Modern tokenizers often automatically induce sets of tokens that include tokens smaller than words, called subwords, they can be arbitrary or meaningful morphemes. Frequently occurring morphemes, like -er are often split into tokens, allowing the tokenizer to recognize new words with the same morpheme.

Such tokenizers have a token learner that takes a raw training corpus and induces a vocabulary, and a token segmenter that takes a raw test sentence and segments it into the tokens in the vocabulary. Three algorithms are commonly used - byte-pair encoding, unigram language modelling, and WordPiece. There is also a SentencePiece library that includes implementations of the first two.

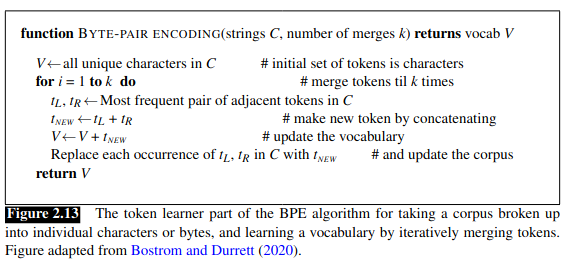

In byte-pair encoding the algorithm starts wtih a vocabulary of the individual characters, then examines the corpus choosing the two symbols that are most frequently adjacent and adds a new merged symbol to the vocab. It replaces all the adjacent characters in the corpus with that new symbol. It continues to do this until k merges have been done creating k new tokens. k is the input parameter to the algorithm, along with whitespace-separated strings (words). This gives the pseudocode:

Word Normalization: Lemmatization and Stemming

Word normalization is the task of putting words/tokens into a standard format, choosing a single normal form for words with multiple forms. This can be helpful for, eg, IR tasks where we want searches for the US to match US, U.S., USA, U.S.A etc…

Case folding is another kind of normalization, helpful for generalization in tasks like IR or speech recognition, but not typically done in tasks like sentiment analysis or classification, information extraction or machine translation, where case can be helpful.

Lemmatization is the process of determining that two words have the same root despite surface differences. Sophisticated lemmatization methods invovle complete morphological parsing of the word. Two broad classes of morphemes can be distinguished stems - the central morpheme of the word, supplying the main meaning, and affixes adding ‘additional’ meaning. eg ‘fox’ is one morpheme, ‘cats’ is two, and a morphological parser will split ‘cat’ from ‘s’.

Lemmatization algorithms can be complex, so we often make do with the simpler, cruder method of stemming. The chapter intros Porter’s stemmer, which is covered elsewhere in the notes. They do commit errors, but can be useful where we need to collapse across variants of the same lemma.

Finally sentence segmentation is another important step. Methods can be rule-based or machine learned. They might involve a dictionary of punctuated abbreviations, which itself could be hand-made or machine learned. In the Stanford core NLP, for example, sentence splitting is rule based and a consequence of tokenization, a sentence ends with sentence ending punctuation where it is not already grouped with other characters into a token.

Minimum Edit Distance

Finally the chapter introduces the Minimum Edit Distance algorithm, covered in a separate note.