Evolutionary Algorithms (Russell Norvig)

As discussed in Russell Norvig Chapter 04: Searching Complex Environments

Definition

Evolutionary algorithms can be seen as variants of stochastic beam search that are explicitly motivated by the metaphor of natural selection in biology: there is a population of individuals (states), in which the fittest (highest value) individuals produce offspring (successor states) that populate the next generation, a process called recombination. (p. 133)

the principal difference between stochastic beam search and evolution is the use of sexual reproduction, wherein successors are generated from multiple individuals rather than just one. (p. 136)

A point of distinction from biological evolution: In biological evolution genes themselves encode the mechanisms whereby the genome is reproduced and translated into an organism. In genetic algorithms, those mechanisms are external, not represented in the strings.

Things that are hard for an organism to learn from the environment end up in the genome, but things that are easy to learn need not reside there.

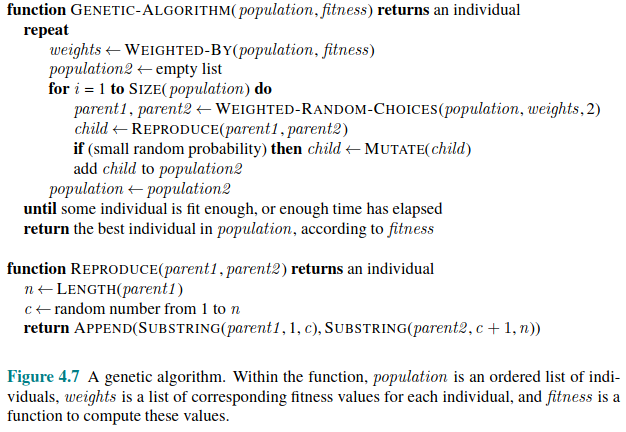

Pseudocode

Forms of Evolutionary Algorithms

There are endless forms of evolutionary algorithms that vary in the following ways:

The size of the population

The representation of each individual. In genetic algorithms, each individual is a string over a finite alphabet (often Boolean), like DNA. In evolution strategies an individual is a sequence of real numbers, and in genetic programming an individual is a computer program.

The mixing number p, which is the number of parents that come together to form offspring. Typically \(p=2\). When \(p=1\) we have a stochastic beam search (or asexual reproduction). It is possible to have \(p>2\).

The selection process for selecting the individuals who will become parents. One possibility is to select from all individuals with probability proportional to fitness [the roulette wheel in MYK’s lectures], or else select n individuals at random, and then choose the p most fit of those as parents.

The recombination procedure. Common to select at random a crossover point to split each of the parent strings and recombine the parts to form two children - one with the first part of parent 1 and the second part of parent 2, and vice versa. [note this is different from the MYK lecture where they produce just one offspring].

The mutation rate, determining how often offspring have random mutations to their representation. Once an offspring is generated every bit in its composition is flipped with probability equal the mutation rate.

The makeup of the next generation. This can just be the new offspring, or include some top-scoring parents from the prior generation (elitism which guarantees the overall fitness never decreases). The practice of culling, in which individuals below a threshold are discarded, can speed up the search.

Schema

Genetic algorithms are similar to stochastic beam search, but with the addition of the crossover operation. This is advantageous if there are blocks that perform useful functions. For example, in an 8 queens puzzle, it could be that there is a block of positions for a few queens that increase the likelihood of success in combination with an other block. “It can be shown mathematically that, if the blocks do not serve a purpose - for example if the positions of the genetic code are randomly permuted - then crossover conveys no advantage” (p. 135)

The theory of genetic algorithms explains how this works using the idea of a schema, which is a substring in which some of the positions can be left unspecified. For example the schema 246***** describes all states of the 8 queens board where the first three positions are fixed. Strings in the set that match the schema are called instances of the schema.

It can be shown that if the average fitness of the instances of a schema is above the mean, then the number of instances of the schema will grow over time… Genetic algorithms work best when schemas correspond to meaningful components of a solution. For example if the string is a respresentation of an antenna, then the schemas may represent components of the antenna, such as reflectors and deflectors. A good component is likely to be good in a variety of different designs. This suggests that successful use of genetic algorithms requires careful engineering of the representation. (pp 136-7).

Assessment

In practice, genetic algorithms have their place within the broad landscape of optimization methods… particularly for complex structured problems such as circuit layout or job-shop scheduling, and more recently for evolving the architecture of deep neural networks… It is not clear how much of the appeal of genetic algorithms arises from their superiority on specific tasks, and how much from the appealing metaphor of evolution. (p. 137)