NLTK Book Chapter 03: Processing Raw Text

Metadata

Title: Processing Raw Text

Number: 3

Core Ideas

Previous chapter showed working with built-in corpora, but most likely you want to work with your own.

The goal of this chapter is to answer the following questions:

How can we write programs to access text from local files and from the web, in order to get hold of an unlimited range of language material?

How can we split documents up into individual words and punctuation symbols, so we can carry out the same kinds of analysis we did with text corpora in earlier chapters?

How can we write programs to produce formatted output and save it in a file?

Accessing text

Shows an example of fetching a .txt file from guttenberg:

from urllib import response

# grab the text and decode it

url = 'http://www.gutenberg.org/files/2554/2554-0.txt'

response = request.urlopen(url)

raw = response.read().decode('utf8')

# tokenize and create an nltk Text object

tokens = word_tokenize(raw)

text = nltk.Text(tokens)

# now we can perform nltk operations

text.collocations()

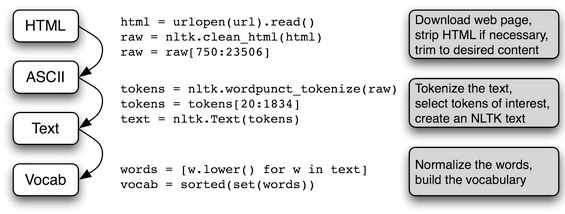

Then shows using beautiful soup to extract text from an html document, and feedparser to fetch content via an RSS feed. Shows opening files to access local text. Summarizes in a pipeline:

String Methods

Reviews string operations

| Method | Functionality |

|---|---|

| s.find(t) | index of first instance of string t inside s (-1 if not found) |

| s.rfind(t) | index of last instance of string t inside s (-1 if not found) |

| s.index(t) | like s.find(t) except it raises ValueError if not found |

| s.rindex(t) | like s.rfind(t) except it raises ValueError if not found |

| s.join(text) | combine the words of the text into a string using s as the glue |

| s.split(t) | split s into a list wherever a t is found (whitespace by default) |

| s.splitlines() | split s into a list of strings, one per line |

| s.lower() | a lowercased version of the string s |

| s.upper() | an uppercased version of the string s |

| s.title() | a titlecased version of the string s |

| s.strip() | a copy of s without leading or trailing whitespace |

| s.replace(t, u) | replace instances of t with u inside s |

Review unicode encoding and decoding (briefly) and then looks at regular expressions. Includes an interesting proto-stemmer using regular expressions.

Shows the regex formulation "<a> <man>" where the angle brackets denote token boundaries and whitespace between the angle brackets are ignored (this is specific to nltk’s findall method for texts. For example to find all three word phrases ending with “bro” we can use nltk like this:

chat = nltk.Text(corpus.words())

chat.findall(r"<.*> <.*> <bro>")

Normalization

Presents the nltk stemmers - Porter and Lancaster. Advises to use them rather than rolling your won. Stemming is not well-defined and we pick teh one that best suits the application we have in mind.

Porter stemmer is a good choice if indexing some texts and want to support search using alternative word forms.

WordNet lemmatizer is a good choice if you want to compile the vocabulary of some texts and produce a list of valid lemmas. It checks against its own dictionary.

Tokenization is also surprisingly difficult, and no single solution is applicable across the board. we have to decide what should count as a token depending on the domain.