Word Sense Disambiguation (Jurafsky Martin)

As discussed in Jurafsky Martin Chapter 18: Word Senses and WordNet

The task of selecting the correct sense for a word is called word sense disambiguation, or WSD. WSD algorithms take as input a word in context and a fixed inventory of potential word senses and outputs the correct word sense in context.

The inventory of sense tags depends on the task - for automated indexing of medical articles we might use the set of MeSH (medical subject headings) thesaurus entries, in other tasks we might use WordNet senses, or for a translation task we might use a set of different translations in the target language.

In situations where we need to disambiguate a small number of words, called lexical sample tasks, we can use a pre-selected set of target words and an inventory of senses from a lexicon. Supervised classification approaches work well here.

More commonly though is the harder problem of disambiguating all words in some text, an all words task. Here the system gets the entire text and a lexicon of senses for every word, and has to disambiguate every word in the text. This is similar to part-of-speech tagging, but with a larger set of tags since every lemma has its own tag set.

Supervised all-word disambiguation tasks are generally trained from a semantic concordance, a corpus in which each open-class word in each sentence is labeled with its word sense from a specific dictionary or thesaurus, often WordNet. Eg the SemCor corpus.

WSD systems are usually evaluated intrinsically, computing F1 against hand-labeled sense tags in a held-out set.

A surprisingly strong baseline is to use the most frequent sense for each word, from the senses in a labeled corpus. For WordNet this is the first sense, since WordNet orders its senses by frequency counts in SemCor.

A second heuristic is one sense per discourse, based on the observation that a word often appears with the same sense in a text.

WSD with Contextual Embeddings

The best performing WSD algorithm is 1-nearest neighbour using contextual word embeddings.

At training time, we pass each sentence in the SemCore labeled dataset through a contextual embedding (eg BERT) resulting in a contextual embedding for each labeled token in Semcore. Then for each sense s of any word in the corpus, for each of the n tokens of that sense, we average their n contextual representations \(v_i\) to produce a contextual sense embedding \(\mathbf{v_s}\) for s:

\[\mathbf{v}_s = \frac{1}{n} \sum_i \mathbf{v}_i \; \forall \mathbf{v}_i \in \textrm{tokens}(s)\]

Then at test time, gien a token of target word t in context, we compute its contextual embedding t and choose its nearest neighbour sense from the training set: ie the sense whose sense embedding has the highest cosine with t:

\[\mathrm{sense}(t) = \underset{s \in \mathrm{senses}(t)}{\mathrm{argmax}} \cos (\mathbf{t},\mathbf{v}_s)\]

What about words that we haven’t seen in the sense-labelled training data? The simplest thing would be to fall back to the most frequent sense, doesn’t seem great though.

There’s a more powerful approach: Impute the missing sense embeddings, bottom-up, using the WordNet taxonomy and super senses and take a back-off approach.

We can get a sense embedding for any higher-level node in the WordNet taxonomy by averaging the embeddings of its children, for example:

The embedding for a synset is the average of its sense embeddings

The embedding for a hypernym is the average of its synset embeddings

The embedding for a supersense (category) is the average of the large set of synset embeddings with that category

So if we find a missing sense we can then go up the hierarchy to look for the best approximation. This is formally modelled in the book. Since all the supersenses in WordNet have some labeled data in SemCor, it’s guaranteed to give some representation for all senses, but very coarse at the last resort level.

Lesk Algorithm

An alternative approach, that doesn’t rely on sense labeled corpora, is the class of knowledge-based algorithms that rely solely on WordNet or other resources. Supervised algorithms generally work better, but knowledge-based methods can be used for languages or domains where thesauruses or dictionaries but not sense labeled corpora are available.

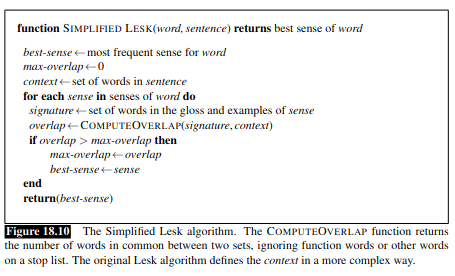

The Lesk algorithm is the oldest and most powerful, and a useful baseline. It is a family of algorithms that choose the sense whose dictionary gloss share the most words with the target word’s neighbourhood. Here is the simplest version, called Simplified Lesk:

We can extend Simplfied Lesk, for example by IDF weighting to downweight frequent words. Best performing is to use word-embedding cosine instead of word overlap to compute the similarity between definition and context.

Modern neural extensions of Lesk use the definitions in dictionary resources to compute sense embeddings that can be directly used instead of SemCor-training embeddings.

Word-in-Context Evaluation

A related task, sitting somewhere in between WSD and context-free similarity, is the word-in-context task, where a system is given two sentences, each with the same target word but a different sentential context. The system must decide whether the target words are used in the same or different senses.

The baseline algorithm to solve WiC uses contextual embeddings with a threshold cosine. We compute the contextual embeddings for the target word in each of the two sentences, then compute the cosine between them. If it’s above a threshold tuned on a devset we return true, otherwise false.