Jurafsky Manning Lecture Summaries: Topic 11: POS Tagging

Relevant to cm3060 Topic 07: Information Extraction

11.1: Introduction to Parts of Speech and POS Tagging

In the West, the idea of lexical categories or “parts of speech” dates back to Aristotle.

The list of 8 parts of speech dates back to Dionysius Thrax (c. 100 BC), but his list is not exactly the ones we’re now taught:

Thrax: noun, verb, article, adverb, preposition, conjunction, participle, pronoun

School grammar: noun, verb, adjective, adverb, preposition, conjunction, pronoun, interjection

In Thrax there is no adjective for example. The status of article is controversial, they are often lumped into adjectives, but behave differently.

When we work with modern POS tagging pipelines we work with more than just 8 categories, because there are distinctions that are useful for downstream tasks.

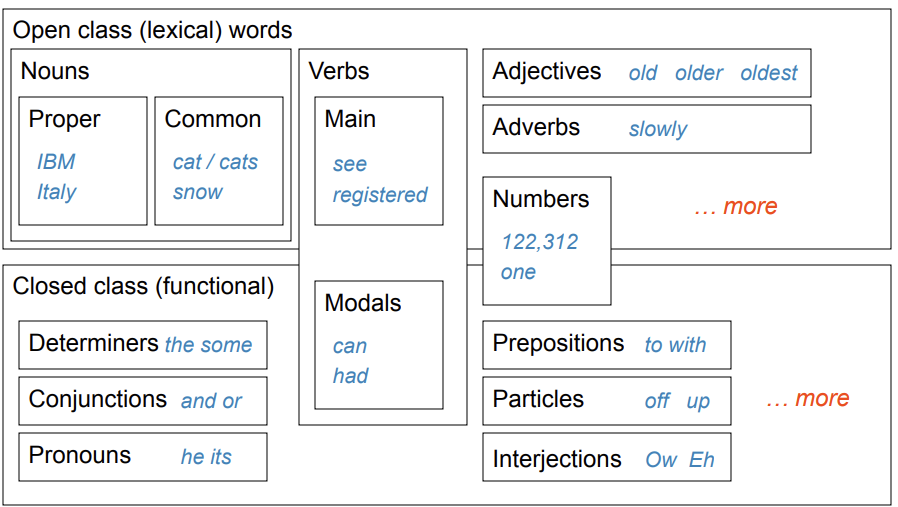

We might distinguish between proper and common nouns for example, main and modal verbs. Determiners take over from articles for example.

One top level distinction is between open and closed classes. Some POS are occupied by small sets of words, like pronouns. There aren’t typically new pronouns invented, but rarely there might be (eg gender-neutral pronoun). These are closed classes.

Alternatively categories like nouns and verbs are open, it’s very common for new words to be added to the class.

The task of POS tagging is to assign the correct POS to every token in the input. This is not trivial because some words like back might have multiple possibilities.

The most commonly used tags are Penn Treebank POS tags, there are 45 of them. Uses include text-to-speech (who do we pronounce “lead”).

You can use them to write regex to output noun phrases for example. You can also use them to speed up a full parser.

If you know the tag, you can back off to it in other tasks like language modeling.

Performance is evaluated by accuracy, modern models are usually around 97% accurate. Baseline is already 90+ though.

Many words are unambiguous, and you get points for every token, like punctuation!

Some POS decisions are hard even for humans.

Only around 11% of word types in the Brown corpus are ambiguous with regard to POS. But they are very common words, such that around 40% of word tokens are ambiguous.

11.2: Methods for Sequence Models for POS Tagging

What are the main sources of info for POS tagging?

Knowledge of neighbouring words and their POS tags. Sequence information can rule out possibilities that are invalid. This was the main source of evidence in the early days but was then superseded by probabilistic methods.

Knowledge of word probabilities turns out to be the most useful, but the former also helps.

So we want to combine features into a feature based tagger.

We can go a long way with just features extracted from the word itself:

Word: The word itself the: the (it’s a DT)

lowercased word: in case we haven’t seen it capitalized before Importantly: importantly

Prefixes: unfathomable: un

Suffixes: Importantly: ly

Capitalization: if away from the beginning it’s a clue that you have a proper noun

Word Shape features: as discussed in Jurafsky Manning Lecture Summaries: Topic 09 Relation Extraction

Then you can build a maxent model to predict the tag. Even without any context you can get around 94% of words right, and does ok on unknown words (83%).

The upper bound appears to be 98% (human agreement). A trigram HMM might produce 95%; MEMM, 97%; Bidirectional dependencies 97%.

(In later recorded lectures, Jurafsky suggests BERT based models are about the same).

The process of improvement involves staring at your output where it goes wrong and thinking of what information you might encode in features to help give the model that information.

When sequence models first arrived, everyone went crazy for them, but often it actually gives you very little information and additional benefit.